Oh, The Humanities

This is the first in my two-part series on STEM. Buckle up, baby 🧪😎🧪.

How Did We Get Here?

I went to high school in the mid-2010s, which is when the push for students to pursue a career in STEM began ramping up. The narrative at the time was that there was a labor shortage across applied STEM roles: we needed more computer scientists, more lab techs, more researchers, more doctors, and you’d get paid handsomely for those skills.

But that was never really true.

A lot of people who took that advice struggled to find roles in their fields post-grad because the jobs they were promised at the end of four years weren’t there. And even those who were (or are) lucky enough to land a role likely aren’t getting paid enough for it. The junior doctors’ (and rail workers’, postal workers’, and teachers’) strikes in the United Kingdom only highlight this further.

The tech side of things looks daunting too. At some point, people’s TikTok FYPs were filled with young women filming “day in the life” vlogs of themselves working at tech giants and tech startups alike, using #womeninSTEM. We were all screaming “yas! Girlboss!!1!!11!!!” for them. Months later, quite a few of them were filming their reactions to being laid off.

The STEM world seems so cutthroat. From the blood, sweat, and tears med students put in with no guarantee of an internship, to Elon Musk’s Twitter acquisition, to the perpetual rise and fall of NFTs, the promise that investing in STEM will land you a secure job looks less like a promise and more like high risk/maybe reward (but don’t bet on it).

I believe this is because the STEM industry is so intertwined with capitalism; the Pythagorean theorem and Newton’s laws of motion never change, but STEM “ha[s] to be on the cutting edge of design, marketing and social networking.” The most prominent example of this at the moment is the permeation of artificial intelligence into our everyday lives. Rowan Ellis goes deep into this in her video essay The Dystopian AI-ification of Capitalism.

Mr. Roboto

From the resume-sorting machines of the early 2000s, which tech producers assured us were programmed with no bias (Narrator from Arrested Development voice: They weren’t), to the WGA and SAG strikes that started earlier this year, artificial intelligence has hugely impacted the way we live our lives, sometimes without us even knowing.

I’m going to skip the flowery language here: robots are racist. Intentional or not, they were programmed that way.

I don’t think school subjects are so narrow and myopic that biology and computer science teachers passively push eugenics programs and woman-hating algorithms. But just like there wasn’t any room for the binomial theorem in AP English, I have very little faith that the people writing algorithms are seriously considering the proven racial and gendered implications of their software.

Even if they are, Ellis makes the point that the people they sell it to don’t care.

“…A lot of them don't seem to be ready to acknowledge the limitations at all, but even if they did, I'm not convinced that much would change, because it's all well and good telling them ‘this is just a language prediction model, it's not really intelligent,’ because if the average person…in business leadership making decisions about the human workforce thinks it does a good enough job, then they might not care about the flaws if it saves them money or increases its profit.”

The professional world is oversaturated with AI, but so is the education system.

It goes beyond reports of university students getting caught using ChatGPT to write essays. Post-COVID, kids in elementary school are finding it way harder to concentrate and have little to no motivation to do so. The sociopolitical landscape has created a culture of nihilism that every other generation could afford to ignore, but Generations Z and Alpha can already feel effects that will only grow over the next ten years.

Between climate change, the economy, and robot police dogs, a lot of kids are wondering what will be left for them after college, if it’s even worth going, and if the planet they know will even be here. The loneliness epidemic and the mental health crisis have also crept up on them.

Ellis’ conclusion here is that generative AI relieves some of that pressure. But the pressure it offloads is mostly in the arts and humanities. You can’t punch numbers into a computer and come up with a whole science project; you may be able to get it to write out the steps to your experiment. But if a child is assigned a poem for English class or 500 words on the Napoleonic Wars, they’re pretty much set.

Ellis points out that the problem with generative AI is not only that it discourages critical thinking, but also that it’s riddled with misinformation and, along with every form of social media, it weans us off reading longform text.

Old English

Columbia English Professor James Shapiro supports this idea. In Nathan Heller’s The End of the English Major, he explains:

“Technology in the last twenty years has changed all of us…I probably read five novels a month until the two-thousands. If I read one a month now, it’s a lot. That’s not because I’ve lost interest in fiction. It’s because I’m reading a hundred Web sites. I’m listening to podcasts.”

It’s even harder to assign reading. The 19th-century novel is unappealing, not because it is inherently uninteresting, but because it’s unsustainable in today’s attention economy. Essentially, we’ve gone from reading the novels, to reading the CliffsNotes, to watching an “ending explained” YouTube video or listening to a podcast summarizing it, to not engaging with it at all.

It’s not about using technology as a supplement to traditional or natural human ways of life anymore. It’s about leveraging technology to optimize every aspect of the everyday. But that technology is using us right back. As long as we live in an attention economy where an algorithm doesn’t care whether something is hurting you as long as you’re looking at it, the desire, let alone the propensity, to think critically and autonomously will continue to be snuffed out.

But this is what tech companies are investing in. From millions of hours of R&D in Cupertino, to millions of hours of child slave labor in the Congo, to millions of dollars in profit, the technology we use comes at a heavy cost with unknown (or at least easy-to-ignore) consequences.

Shapiro posits that it started with Sputnik and the 1958 National Defense Education Act, which steered roughly a billion dollars of federal education funding toward science, math, and foreign languages. He thinks the shift didn’t really hit universities until the first inklings of the financial crisis in 2007, when university funding was cut. The push for STEM, and the narrative that we needed more people in STEM fields, started shortly thereafter.

The early 2010s were when the propaganda really started ramping up. The most condensed, obvious example I stumbled upon was a series of articles from The Atlantic called Left Brain America. The articles are essentially titled “STEM Bad????” but their bodies all say “actually, STEM Good!!!!!” I’m not going to do a deep dive into it, but there’s an article titled “Can Brain Drain Be Beneficial?”

From the 2010s on, humanities and social science enrollment numbers have been falling steadily and rapidly. Crippling student debt makes it a no-brainer to do a computer science degree and just take a few sociology or English literature modules on the side if you’re really interested. It isn’t any better at Ivy League universities, Heller reports: enrollment in the humanities at Harvard has steadily fallen 75% in the last 15 years.

It really doesn’t matter where you go, and it only kind of matters what you do. This isn’t because students aren’t interested; it’s that after moving through the education system, and life in general, hearing that certain degrees are guaranteed to get you nowhere but a job at McDonald’s or on the streets pushing a battered tarp around in a stolen grocery cart, it’s easy to make the logical choice and stay away. People want to go where the capital is (for first-generation immigrants especially, this is sometimes more of a need than a want), and most if not all of the capital, both in and out of education, is going into STEM. Even if the investment in STEM turns out to be a bad choice, the investment in the image is what makes it appealing.

Picture This

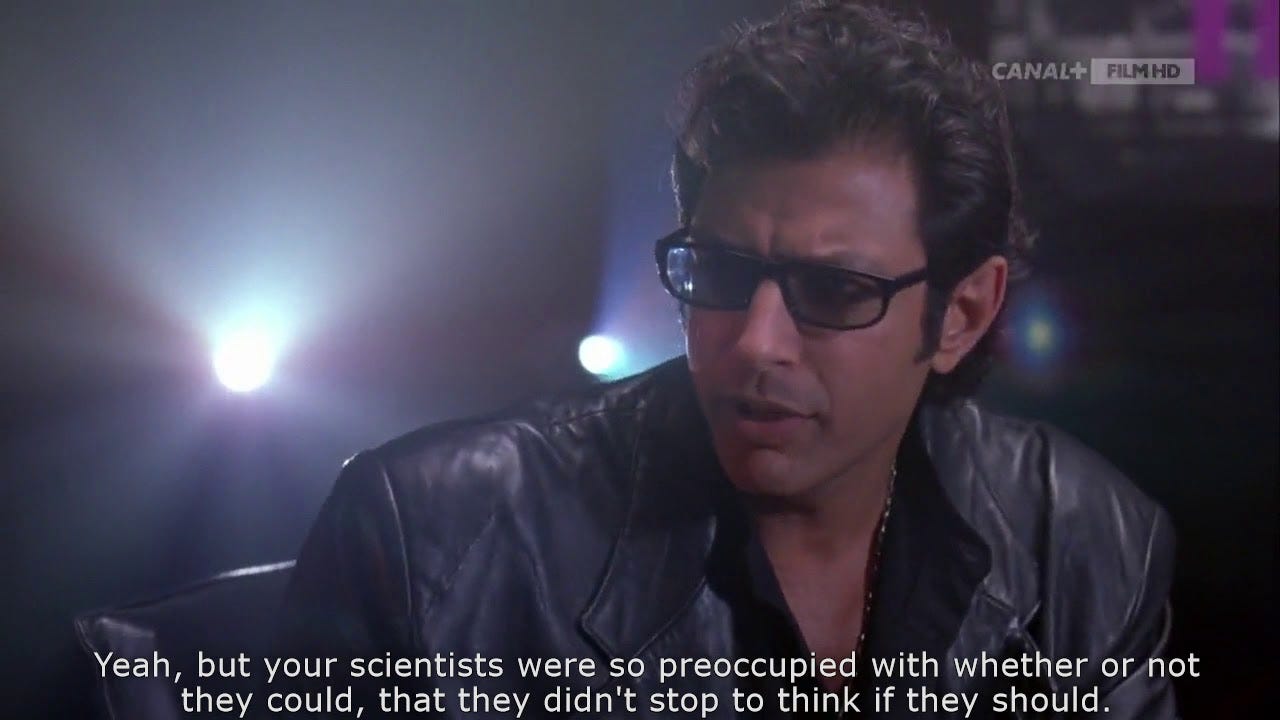

If you think about the average depiction of STEM majors post-university, the worst you can picture is The Big Bang Theory, and that’s only because those characters are annoying as hell. Tony Stark, Walter White, House, the characters on Grey’s Anatomy, Dr. Emmett Brown: science and science fiction are huge plot drivers, and these stories draw in audiences and money. The characters are cool, levelheaded, arrogant at times, and some have a God complex. Most importantly, they’re always in control, even when they’re not.

In contrast, two prominent films come to mind where the characters’ jobs and lives aren’t centered on STEM. The first is the film Set It Up, where the opening montage is assistants and interns frantically running around trying to fulfill every whim and wish of their bosses.

They’re having meltdowns at service workers over salads. They’re having panic attacks at their desks before their boss aggressively beckons and they have to put on a straight face. They stop mid-sex to run to their bosses. They break up with their bosses’ partners for them. They’re carrying urine samples to their bosses’ doctors. In the midst of this, one of them snarks at a customer service agent while trying to get their boss bumped to first class: “I’ve got a master’s in sociology and nothing else to do today, so put me on hold as long as you want.”

The main characters, personal assistants Harper and Charlie, bond over how far they’re willing to go for their bosses, bragging about how long they stay at the office. In the end, both of them quit or get fired; Harper decides to put her journalism degree to use and focus on her writing, and Charlie starts working as a temp.

The other is my favorite movie, The Devil Wears Prada. Andy Sachs, an aspiring journalist, applies to work at the Vogue-esque Runway Magazine after she is rejected from every other writing job she has applied for; she also turns down an offer to study at Stanford Law.

She runs around Manhattan for her boss Miranda Priestly, hunting down an impossible Harry Potter manuscript and 75 Hermès scarves, among other frivolities. With help from Stanley Tucci’s character, she learns to walk the walk and talk the talk of the industry, which comes at the cost of her friends and boyfriend, who feel she has been sucked into the fashion world. In the end, she gives it all up to work at a small newspaper run out of a dusty office.

These stories are honest, virtuous, and inspiring, but ultimately unglamorous. Even stories like Black Swan, Dead Poets Society, Whiplash, or I May Destroy You depict the beauty and power of art while making sure you know that you have to suffer for it¹ and that there’s a likelihood you will die. This usually isn’t depicted in a zany or exhilarating way (the closest I’ve seen someone get to this recently is Todd Field’s Tár), but in a viscerally unsexy way.

The characters in Set It Up and The Devil Wears Prada start off as lost twentysomethings slaving their way through corporate hell with no real chance of working up to the positions they aspire to. Even if they have the degree, they can’t apply it to what they’re doing. Harper and Andy also have no time to do what they actually want to do, because they spend almost every waking moment doing something for someone else. Contrast this with the STEM grad, who spends their day doing what they love (or at the very least what they studied) and doesn’t have to worry about going home to work on their passion project.

The humanities grad has no time to apply their degree to the real world; they have nothing to contribute. Heller interviews a student, who explains:

“You write one essay better than the other from one semester to the next. That’s not the same as, you know, being able to solve this economics problem, or code this thing, or do policy analysis…When I was applying, I kept thinking, What qualifies me for this job? Sure, I can research, I can write things. But those skills are very difficult to demonstrate, and it’s frankly not what the world at large seems in demand of.”

To me, this sounds like the idea that critical thinking and analysis are a waste of time, which is probably why conservatives push so hard to eradicate the arts and humanities from schools and universities. Thinking, and more importantly thinking critically, isn’t a waste of time, and the lack of it affects the way we perceive cultural critique, analysis, sociopolitical issues, and the discourse we have. My favorite way to have that discourse is through art and entertainment, but in time that could become obsolete too.

Protest and Survive²

Along with demands like fair residuals for streaming and limits on mini-rooms, the 2023 Writers Guild of America strike is about restricting the use of AI in the creative process. The hosts of the podcast Rehash theorize that this is an aftereffect of the pandemic: streaming services were one of the very few industries to see a boom in revenue during the pandemic, which is why there are now more insert-cable-channel-here+ platforms than ever. But as the outside world opened back up, that growth started to plateau, and the C-suite execs were left scrambling to hold on to the magic money they made three years ago.

Instead of using the big creative brains that apparently got them where they are today to figure out how to keep people in their jobs, they decided the best way to keep their money was to cut costs. But they also had to maintain the quality of the content they were putting out, at least to a certain extent. This is where mini-rooms come in (short writing gigs that give writers no creative control over their intellectual property once it leaves the room), and AI follows right behind them.

The WGA, having the foresight and fearing an imminent reality straight out of I, Robot coming soon to a writers’ room near them, proposed regulating the studios’ use of AI: no AI involvement in writing or rewriting original or existing intellectual property, no use of AI as source material, and no use of writing material covered by the 2020 Minimum Basic Agreement to train any AI. The Alliance of Motion Picture and Television Producers countered by offering WGA reps the chance to come to an annual meeting to discuss technological advancements in entertainment.

Jackson from the YouTube channel Skip Intro delves into the ways technology is poised to envelop entertainment in the video essay “TV is about to Change. Forever.” He opens with a scene from the Comedy Central show Corporate. The scene depicts a man confronting a woman in a fluorescent, barren office space; the only furniture is a desktop setup with dated CGI on the screen. The woman explains that an algorithm writes Pickles 4 Breakfast, a popular children’s television show. Over the course of their short conversation, it produces 50 more episodes ready to air. The show’s premise is that a toddler eats pickles for breakfast, but sometimes Angry Birds-looking characters come to steal the pickles, and when they do, the child whips out a sickle and chops the birds in half until it’s bathing in bird blood, munching on its well-earned pickle. It’s bad, disturbing, nonsensical, and not far from what algorithms produce in real life.

There are plenty of examples online of generative AI writing movie scripts. Some of them, like the ones prompted to write an episode of Succession or a Wes Anderson film, aren’t so much good as they are generally accurate. The algorithm can prove that it knows how to write in a distinct style when it has plenty of examples to pull from, but as the hosts of Rehash discuss, bots like ChatGPT can’t create good or even mediocre original scripts when prompted; they can’t even write jokes, because they don’t have the capacity.

This isn’t because the technology just hasn’t caught up yet. No matter how much you train a robot, it’s just not going to have the human touch. Creatives can make anything out of nothing: aliens, war epics, high school musicals. They don’t have to live an experience to write about it, although that can help. Maybe the most important element of these stories is the feeling writers inject into them; their lived experiences create feeling, humor, wisdom, and so much more.

My favorite hobbies, the ones most important to me, are consuming art and entertainment and critically analyzing them. I don’t hate STEM, but I am wary of the industry’s potential to kill my hobbies. I’m scared to picture a reality where people at school and work are discouraged from engaging in critical thinking, relying on a robot that can’t actually think critically to do it for them. A reality where Star Wars 57 is written by a robot that would’ve been featured in the prequels. But that’s not far off.

Critical thinking shouldn’t be viewed as something we need to optimize; we should be afforded the time and grace to think by ourselves and with other people. Critical thinking affects the way we treat ourselves and our communities. It is essential to the way we make and view art. It is sacred, and it should be treated as such.

What’s your favorite high school film about a school administration cutting arts funding and the plucky gang of outcasts fighting to get it back??? Mine are Lemonade Mouth and Bring It On Again.

I just want to lie around, sparsely clothed and eating grapes, discussing philosophy about shadows on cave walls and whatnot, much like the ancient Greeks (maybe even include women and POC too!). It seems we need to prove our worth to university boards, future employers, and even potential students. I think it might be possible to retain what makes the Humanities special while also appearing 'appealing' to students and employers (I know we shouldn't have to do that, but here we are 😞). It honestly could be as easy as changing the names of programs: instead of 'Anthropology', we call the university program 'Participatory Research Methods' (or what I did: changed the degree name to 'MSc in Digital Ethnographic Research Methods' on my resume lmao). There really are skills the Humanities teach that are very useful to employers, but it seems that most university professors, administrators, etc. are wholly unqualified to guide students in how to leverage those skills (unless it's ONLY TO GO INTO ACADEMIA!!!).

Anyway, I will lie passively and accept the inevitable permeation of AI into my every orifice (I already use it to do 40% of my work).

MAYBE it'll turn out OK? Maybe AI will take absolutely all the jobs so we finally get universal basic income and all that humans do all day is lie around eating grapes imagining thought experiments about trams.